- Services

- Discovery & Intelligence Services

- Publication Support Services

- Sample Work

Publication Support Service

- Editing & Translation

-

Editing and Translation Services

- Sample Work

Editing and Translation Service

-

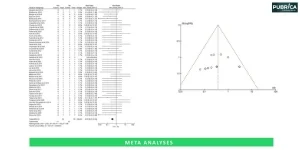

- Research Services

- Sample Work

Research Services

- Physician Writing

- Sample Work

Physician Writing Service

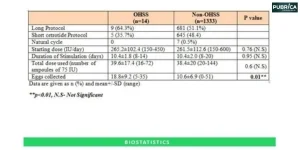

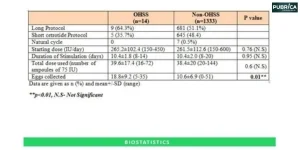

- Statistical Analyses

- Sample Work

Statistical Analyses

- Data Collection

- AI and ML Services

- Medical Writing

- Sample Work

Medical Writing

- Research Impact

- Sample Work

Research Impact

- Medical & Scientific Communication

- Medico Legal Services

- Educational Content

- Academic Editorial Services

- Educational Editorial Service

-

Education Editorial Services

-

- Industries

- Subjects

- About Us

- Academy

- Insights

- Contact Us

Challenges of AI in Research and Publication in 2026


1. The Expanding Role of AI in Research

2. Authorship & Intellectual Ownership Challenges in AI-Driven Research

3. Bias and Data Integrity

4. Reproducibility Crisis Intensified

5. Ethical Concerns and Misuse

6. Peer Review Disruption

7. Over-Reliance and Skill Erosion

8. Publication Inequality

9. The Path Forward

10. Conclusion

Artificial intelligence (AI) is rapidly transforming research and academic publishing. By 2026, AI tools not only assist with literature reviews, data collection, and analysis; they can also draft complete manuscripts, support peer review, and generate hypotheses from an existing problem statement. These changes promise greater efficiency and innovation, but they also confront researchers, publishers, and policymakers with ethical, methodological, and institutional challenges that must be understood to work successfully with AI in research.[1,2]

1. The Expanding Role of AI in Research

AI is now part of every stage of the research process, including the stages that precede data collection and analysis. AI tools have reduced the time and effort that many research tasks require, such as preparing systematic reviews and predicting the outcomes of experiments. Generative AI is also used to draft papers, summarise large amounts of data, and suggest citations. These tools have increased the productivity of many researchers, but as they become commonplace the line separating human from machine contributions has blurred.[1,2]

Within publishing, AI is used for plagiarism checking, language editing, peer-review support, and journal recommendation. However, many researchers worry that their growing reliance on these tools undermines the originality and authorship of their work. As AI systems become more autonomous, it will become increasingly difficult to distinguish assistance from authorship in a research publication.

2. Authorship & Intellectual Ownership Challenges in AI-Driven Research

In 2026, determining the ownership of AI-generated research content has become one of the most important ethical issues in AI research. When AI contributes significantly to writing or analysing a piece of work, it is unclear who should be credited as the author. Traditional authorship criteria do not adequately account for the contributions of machine agents.[3]

| Aspect | Traditional Research | AI-Driven Research (2026) |
|---|---|---|
| Authorship | Human researchers | Human + AI collaboration |
| Ownership | Clear intellectual ownership | Ambiguous ownership rights |
| Accountability | Assigned to authors | Shared or unclear responsibility |
| Contribution Tracking | Documented manually | Difficult to quantify AI input |

This ambiguity creates legal and ethical concerns, especially in cases of intellectual property disputes or research misconduct, further emphasizing the growing challenges of AI in research.
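One way to make AI input easier to track is to log each use of an AI tool as it happens and generate a disclosure statement from that log. The sketch below is only an illustration: the class names, fields, tool names, and the wording of the statement are all hypothetical, and the closing sentence about author responsibility is an assumed policy, not one prescribed by any particular journal.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class AIUseEntry:
    """One recorded use of an AI tool (all field names are illustrative)."""
    tool: str         # name of the AI tool used
    task: str         # what the tool was used for
    section: str      # manuscript section affected
    verified_by: str  # human who checked the output


@dataclass
class AIDisclosure:
    manuscript_title: str
    entries: List[AIUseEntry] = field(default_factory=list)

    def add(self, tool: str, task: str, section: str, verified_by: str) -> None:
        self.entries.append(AIUseEntry(tool, task, section, verified_by))

    def statement(self) -> str:
        """Render the log as a plain-text disclosure paragraph."""
        if not self.entries:
            return "No AI tools were used in preparing this manuscript."
        lines = [
            f"{e.tool} was used for {e.task} in the {e.section} section; "
            f"output was verified by {e.verified_by}."
            for e in self.entries
        ]
        # Assumed policy: accountability stays with the human authors.
        lines.append("The human authors take full responsibility for the content.")
        return " ".join(lines)


# Hypothetical tools and authors, for illustration only.
disclosure = AIDisclosure("Example manuscript")
disclosure.add("ExampleLLM", "language editing", "Discussion", "author A.B.")
disclosure.add("ExampleStatsBot", "drafting analysis code", "Methods", "author C.D.")
print(disclosure.statement())
```

Keeping the log per section and per verifier is a design choice: it ties each AI contribution to a named human who checked it, which is the accountability link the table above describes as unclear.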

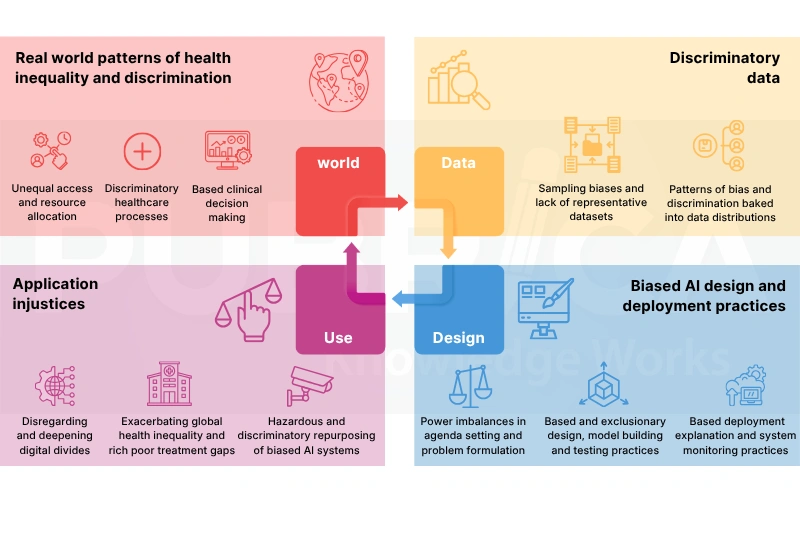

3. Bias and Data Integrity

An AI model is only as good as the data used to build it. That remains true in 2026, and significant bias persists across areas of science including healthcare, the social sciences, and economics. Bias in the original model can produce biased outputs, for example results skewed towards either an under-represented or an over-represented population, which is one of the major challenges of AI in research.

When researchers rely on an AI model, particularly a black-box system (one whose algorithms give no insight into how each step produces an output), they may inadvertently overlook bias, including their own. This can damage both the validity and the reproducibility of their results. Ultimately, biased outputs such as false positives or false negatives may influence policy worldwide.[4]
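A simple first check for this kind of bias is to compare false positive and false negative rates across population groups. The following is a minimal sketch, assuming binary labels and a small made-up dataset; the function name, group labels, and records are hypothetical and are not taken from any study cited here.

```python
from collections import defaultdict


def error_rates_by_group(records):
    """Compute per-group false positive and false negative rates.

    records: iterable of (group, y_true, y_pred) with binary labels 0/1.
    """
    counts = defaultdict(lambda: {"fp": 0, "fn": 0, "neg": 0, "pos": 0})
    for group, y_true, y_pred in records:
        c = counts[group]
        if y_true == 1:
            c["pos"] += 1
            if y_pred == 0:
                c["fn"] += 1  # missed a true case
        else:
            c["neg"] += 1
            if y_pred == 1:
                c["fp"] += 1  # flagged a non-case
    rates = {}
    for group, c in counts.items():
        rates[group] = {
            "n": c["pos"] + c["neg"],
            # None when a group has no negatives/positives to divide by
            "fpr": c["fp"] / c["neg"] if c["neg"] else None,
            "fnr": c["fn"] / c["pos"] if c["pos"] else None,
        }
    return rates


# Made-up records: group "B" is under-represented (3 rows versus 6).
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 0, 0),
    ("A", 0, 0), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 0), ("B", 0, 1), ("B", 1, 0),
]
rates = error_rates_by_group(records)
for group, r in sorted(rates.items()):
    print(group, r)
```

A large gap between groups in either rate, or a very small `n` for one group, is a signal to inspect the training data before trusting the model's outputs. It does not by itself prove or rule out bias.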

4. Reproducibility Crisis Intensified

Reproducibility has long been a challenge in academia, and AI adds new layers of complexity. AI models often depend on proprietary algorithms, undisclosed datasets, or dynamic learning systems that change over time.[5]

| Factor | Traditional Research Issues | AI-Related Complications |
|---|---|---|
| Data Availability | Limited access | Proprietary datasets |
| Method Transparency | Documented methods | Black-box algorithms |
| Experiment Replication | Possible with effort | Often difficult or impossible |
| Model Variability | Minimal | High due to continuous learning |

As a result, replicating AI-driven studies becomes significantly more difficult, raising concerns about scientific reliability.
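Some of these complications can be reduced by recording exactly what a run used: a fingerprint of the dataset, the model identifier and version, the random seed, and the parameters. The sketch below shows one possible shape for such a run manifest using only the Python standard library; the function names, the model identifier, and the parameter names are assumptions made for illustration.

```python
import hashlib
import json
import platform
import random


def sha256_of_bytes(data: bytes) -> str:
    """Return a hex digest that changes if the dataset changes at all."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(dataset_bytes: bytes, model_id: str, seed: int, params: dict) -> dict:
    """Collect the details another researcher would need to repeat the run."""
    return {
        "dataset_sha256": sha256_of_bytes(dataset_bytes),
        "model_id": model_id,  # hypothetical identifier, including version
        "seed": seed,
        "params": params,
        "python_version": platform.python_version(),
    }


# Tiny in-memory stand-in for a real data file.
dataset = b"id,outcome\n1,0\n2,1\n"
manifest = build_manifest(dataset, "example-model-v1.0", 42, {"temperature": 0.0})

# Seeding from the manifest makes the pseudo-random steps repeatable.
random.seed(manifest["seed"])
first_draw = random.random()
random.seed(manifest["seed"])
second_draw = random.random()

print(json.dumps(manifest, indent=2, sort_keys=True))
print("draws match:", first_draw == second_draw)
```

A manifest like this does not make a proprietary or continuously updated model reproducible, but it does make clear which version was used and whether the data has changed since.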

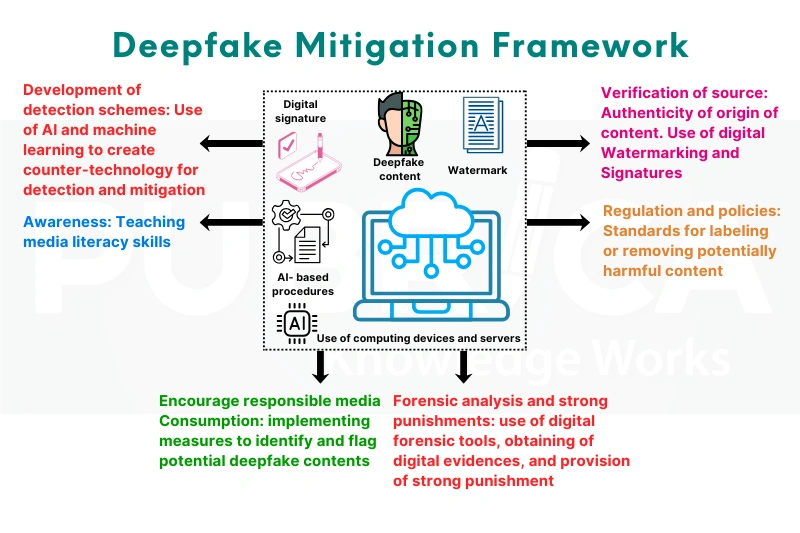

5. Ethical Concerns and Misuse

In recent years, the misuse of AI in scientific research has become a significant ethical issue. The number of fraudulent, AI-generated research articles has increased significantly in 2026, and the sheer volume of AI-generated papers poses a major risk to the integrity of academic publishing because it is so easy to fabricate data and falsify references.[6]

Because AI-generated text can look convincingly valid, it will become increasingly difficult for scientists to identify fraudulent publications without additional support in the peer-review process. The problem is compounded by the potential for unintended negative consequences when AI is used in sensitive areas of research, such as genetic engineering or defence industry-related fields.

6. Peer Review Disruption

Peer review, an essential part of academic publishing, is changing. AI is now used to help reviewers summarise articles, point out errors, and even inform decision-making itself.[7]

However, over-reliance on AI in peer review is an emerging risk, because AI may overlook the nuances of the arguments and ideas presented in an article.

| Peer Review Element | Human Review | AI-Assisted Review |
|---|---|---|
| Evaluation Depth | Contextual and nuanced | Pattern-based analysis |
| Speed | Time-consuming | Rapid processing |
| Bias | Subjective bias | Algorithmic bias |
| Transparency | Limited | Often opaque |

This shift raises questions about the future role of human expertise in evaluating research quality.

7. Over-Reliance and Skill Erosion

As AI tools grow more powerful, researchers risk becoming too dependent on them. In 2026, concern about the loss of skills is rising, especially for early-stage researchers.

Skills in critical thinking, research design, and analysis could deteriorate as a result of this dependency, and these are the skills that scientific innovation depends on.

8. Publication Inequality

Access to AI tools is not equal across countries, which adds to the broader challenges of AI in research. Institutions with more resources can use the latest AI tools and gain a competitive advantage in research and publication.[8]

This widens existing inequalities in academia. Researchers in the developing world may find it hard to compete with better-resourced peers elsewhere.

| Dimension | High-Resource Institutions | Low-Resource Institutions |
|---|---|---|
| AI Access | Advanced tools available | Limited or no access |
| Publication Output | High | Lower |
| Research Speed | Accelerated | Slower |
| Global Visibility | Strong | Limited |

9. The Path Forward

Despite these challenges, AI has tremendous potential for research. Addressing them requires a combined effort from all stakeholders: guidelines for AI authorship must be developed, transparency in AI algorithms must be ensured, and the ethical use of AI must be encouraged.

The educational sector must also change so that researchers are trained to use AI responsibly while maintaining their critical thinking skills.


10. Conclusion

In 2026, AI has significantly transformed research and publication, bringing both opportunities and challenges. The future of research depends on how effectively the field addresses those challenges in the coming years. By encouraging transparency and responsibility in research and publication, the full potential of AI in research can be realised.


References

1. Morino, E., & Tokita, D. (2026). AI in clinical trials: Status, challenges, and future directions for emergency infectious disease clinical trials - Insights from the 2025 iCROWN Symposium. Global health & medicine, 8(1), 70–71. https://doi.org/10.35772/ghm.2026

2. Hosseini, M., Murad, M., & Resnik, D. B. (2026). Benefits and Risks of Using AI Agents in Research. The Hastings Center report, 56(1), 13–17. https://doi.org/10.1002/hast.70025

3. Yousaf, M. N. (2025). Practical Considerations and Ethical Implications of Using Artificial Intelligence in Writing Scientific Manuscripts. ACG case reports journal, 12(2), e01629. https://doi.org/10.14309/crj.0000000

4. Althubaiti, A. (2016). Information bias in health research: definition, pitfalls, and adjustment methods. Journal of multidisciplinary healthcare, 9, 211–217. https://doi.org/10.2147/JMDH.S10480

5. Chakravorti, T., Koneru, S., & Rajtmajer, S. (2025). Reproducibility and replicability in research: What 452 professors think in Universities across the USA and India. PloS one, 20(3), e0319334. https://doi.org/10.1371/journal.pone

6. Tulchinsky, T. H. (2018). Ethical Issues in Public Health. Case Studies in Public Health, 277–316. https://doi.org/10.1016/B978-0-12-804571-8.00027-5

7. Proctor, D. M., Dada, N., Serquiña, A., & Willett, J. L. E. (2023). Problems with Peer Review Shine a Light on Gaps in Scientific Training. mBio, 14(3), e0318322. https://doi.org/10.1128/mbio.03183-22

8. Arcaya, M. C., Arcaya, A. L., & Subramanian, S. V. (2015). Inequalities in health: definitions, concepts, and theories. Global health action, 8, 27106. https://doi.org/10.3402/gha.v8.2710