- Services

- Discovery & Intelligence Services

- Publication Support Services

- Sample Work

Publication Support Service

- Editing & Translation

-

Editing and Translation Services

- Sample Work

Editing and Translation Service

-

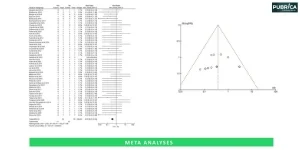

- Research Services

- Sample Work

Research Services

- Physician Writing

- Sample Work

Physician Writing Service

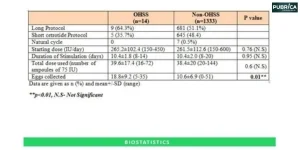

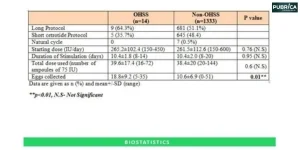

- Statistical Analyses

- Sample Work

Statistical Analyses

- Data Collection

- AI and ML Services

- Medical Writing

- Sample Work

Medical Writing

- Research Impact

- Sample Work

Research Impact

- Medical & Scientific Communication

- Medico Legal Services

- Educational Content

- Academic Editorial Services

- Educational Editorial Service

-

Education Editorial Services

-

- Industries

- Subjects

- About Us

- Academy

- Insights

- Contact Us

Systematic Review Automation Tools: A Complete Guide for Researchers


Systematic review automation (SRA) tools are designed to streamline the labour-intensive stages of evidence synthesis. Systematic reviews are resource-intensive and can take a great deal of time, but advances in AI, machine learning, and natural language processing have produced many tools that automate parts of the process.[1]

This guide explains how these systems work, provides an overview of the most widely used systematic review automation tools, and discusses the applications, benefits, and practical issues related to each of these tools.

1. Why Use Automation in Systematic Reviews?

INSIGHT:

Machine learning-assisted screening can reduce workload by up to 40–50% while maintaining near-complete recall.[5]
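To make figures like these concrete, the sketch below computes recall and the share of records left unscreened when a reviewer stops partway through a ranked list. The function name, the toy ranking, and the stopping point are illustrative assumptions; they are not outputs of any specific tool or study.

```python
def screening_metrics(ranked_labels, stop_after):
    """Return (recall, workload_saved) when screening stops after `stop_after` records.

    ranked_labels: 0/1 relevance labels in the order the tool presented the records.
    """
    if not ranked_labels:
        raise ValueError("ranked_labels must not be empty")
    if not 0 <= stop_after <= len(ranked_labels):
        raise ValueError("stop_after must be between 0 and the number of records")
    total_relevant = sum(ranked_labels)
    found = sum(ranked_labels[:stop_after])
    # Recall: share of all relevant records seen before stopping.
    recall = found / total_relevant if total_relevant else 1.0
    # Workload saved: share of records the reviewer never had to read.
    workload_saved = 1 - stop_after / len(ranked_labels)
    return recall, workload_saved


# Hypothetical ranking: 20 records, 4 relevant, all surfaced in the first 10 positions.
ranked = [1, 0, 1, 0, 0, 1, 0, 0, 1, 0] + [0] * 10
recall, saved = screening_metrics(ranked, stop_after=10)
print(f"recall={recall:.2f}, workload saved={saved:.0%}")
```

In a real review the true labels of unscreened records are unknown, so recall can only be estimated; the calculation above applies to simulations on already-labelled datasets.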

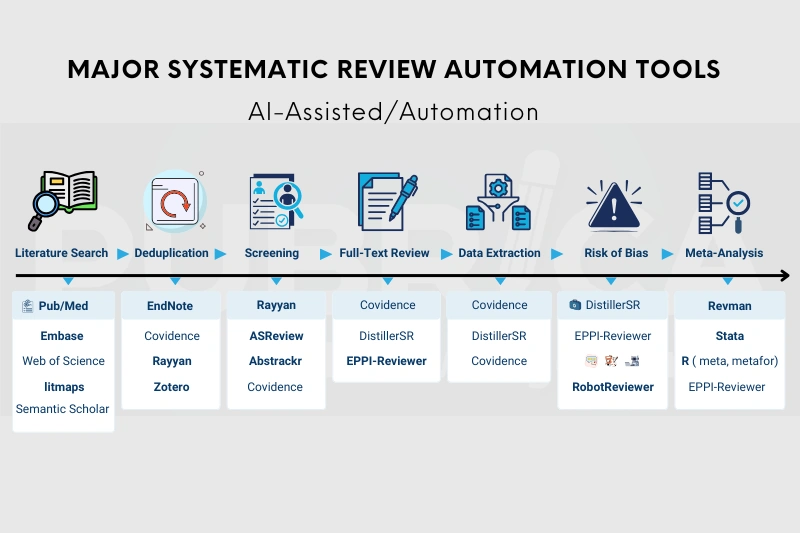

2. Major Systematic Review Automation Tools

The following platforms are widely regarded as among the best systematic review tools for researchers seeking efficiency, transparency, and structured workflows.

2.1. Rayyan – AI-Assisted Screening

Rayyan is a web-based platform that supports blinded screening and uses integrated artificial intelligence to rank records according to the reviewers' earlier decisions. It is widely used among systematic review screening tools because of its AI-driven prioritisation capabilities.

Best for:

- Collaborative screening of titles

- Detection of duplicates

- Resolution of conflicts

Rayyan’s predictive inclusion ranking can help reduce the burden on reviewers while providing a degree of transparency.[6]
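Blinded screening by two reviewers produces two independent sets of decisions that must then be reconciled. The sketch below shows one way to list the conflicts and summarise agreement with Cohen's kappa. It does not use Rayyan's interface or export format; the record IDs, decision labels, and function names are hypothetical.

```python
from collections import Counter


def find_conflicts(reviewer_a, reviewer_b):
    """Return sorted record IDs on which the two reviewers disagree."""
    shared = reviewer_a.keys() & reviewer_b.keys()
    return sorted(rid for rid in shared if reviewer_a[rid] != reviewer_b[rid])


def cohen_kappa(reviewer_a, reviewer_b):
    """Cohen's kappa over the records that both reviewers have screened."""
    shared = sorted(reviewer_a.keys() & reviewer_b.keys())
    if not shared:
        raise ValueError("no records screened by both reviewers")
    n = len(shared)
    # Observed agreement: share of records with identical decisions.
    observed = sum(reviewer_a[r] == reviewer_b[r] for r in shared) / n
    # Expected agreement if each reviewer decided independently at their own rates.
    counts_a = Counter(reviewer_a[r] for r in shared)
    counts_b = Counter(reviewer_b[r] for r in shared)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both reviewers used one identical category throughout
    return (observed - expected) / (1 - expected)


# Hypothetical decisions from two blinded reviewers.
a = {"r1": "include", "r2": "exclude", "r3": "exclude", "r4": "include"}
b = {"r1": "include", "r2": "exclude", "r3": "include", "r4": "include"}
print(find_conflicts(a, b))  # ['r3']
print(cohen_kappa(a, b))     # 0.5
```

Only the records returned by `find_conflicts` need discussion or a third reviewer; the kappa value is a summary that can be reported alongside the screening results.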

2.2. ASReview – Active Learning Screening

ASReview uses active learning: as reviewers label records, the model is updated with their decisions and re-ranks the remaining records so that the most likely relevant ones are shown next.

Key features:

- Open-source platform

- Highly adjustable ML algorithms

- Very effective for large datasets

TIP:

Use ASReview after clearly defining inclusion criteria, so that inconsistent early decisions do not mislead the algorithm.
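The active learning idea described in this section can be sketched as a short loop: train on the labels collected so far, show the reviewer the highest-ranked unlabelled record, record the decision, and repeat. The code below is a generic illustration with a hand-written naive Bayes scorer; it is not ASReview's implementation or API, and the records, seed labels, and simulated reviewer are all hypothetical.

```python
import math
from collections import Counter


def tokenize(text):
    return text.lower().split()


def train(labelled):
    """Count words per class from (text, label) pairs, where label is 1 or 0."""
    word_counts = {0: Counter(), 1: Counter()}
    doc_counts = Counter()
    for text, label in labelled:
        word_counts[label].update(tokenize(text))
        doc_counts[label] += 1
    return word_counts, doc_counts


def relevance_score(text, model):
    """Log-odds of relevance under naive Bayes with add-one smoothing."""
    word_counts, doc_counts = model
    vocab_size = len(set(word_counts[0]) | set(word_counts[1]))
    total = {c: sum(word_counts[c].values()) + vocab_size for c in (0, 1)}
    score = math.log((doc_counts[1] + 1) / (doc_counts[0] + 1))
    for word in tokenize(text):
        p_relevant = (word_counts[1][word] + 1) / total[1]
        p_irrelevant = (word_counts[0][word] + 1) / total[0]
        score += math.log(p_relevant / p_irrelevant)
    return score


def active_screening(records, seed_labels, reviewer, budget):
    """Repeatedly show the reviewer the record currently ranked most relevant.

    records: dict of record_id -> text
    seed_labels: dict of record_id -> 0/1, labelled before the loop starts
    reviewer: callable(record_id) -> 0/1, standing in for the human decision
    budget: maximum number of additional records to screen
    """
    if not seed_labels:
        raise ValueError("at least one seed label is required")
    labels = dict(seed_labels)
    order = []
    for _ in range(budget):
        unlabelled = [rid for rid in records if rid not in labels]
        if not unlabelled:
            break
        # Retrain on every decision made so far, then pick the top-ranked record.
        model = train([(records[rid], lab) for rid, lab in labels.items()])
        best = max(unlabelled, key=lambda rid: relevance_score(records[rid], model))
        labels[best] = reviewer(best)
        order.append(best)
    return order, labels


# Hypothetical records and a simulated reviewer with known answers.
records = {
    "a": "statin therapy randomised trial cardiovascular outcomes",
    "b": "survey of nurse staffing levels",
    "c": "randomised trial of statin dose and cardiovascular events",
    "d": "qualitative interviews about hospital staffing",
    "e": "cardiovascular outcomes after statin therapy",
}
truth = {"c": 1, "d": 0, "e": 1}
order, labels = active_screening(records, {"a": 1, "b": 0}, lambda rid: truth[rid], budget=2)
print(order)  # ['e', 'c']
```

With a budget of two, both relevant records are shown before the irrelevant one, which is the behaviour that lets reviewers stop early on large datasets. The seed labels also show why clear inclusion criteria matter: every later ranking depends on them.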

2.3. Covidence – End-to-End Review Management

Covidence offers:

- Citation Import & Deduplication

- Dual Screening Workflows

- Data Extraction Templates

- PRISMA Flow Diagram Generation

Covidence is widely used for Cochrane Reviews because it organises the work of producing a review into a structured process.
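Two of the steps listed above, deduplication and PRISMA flow counts, are simple enough to sketch in a few lines. The code below is a minimal illustration under stated assumptions, not Covidence's matching logic: the record fields, the matching rule (normalised DOI, otherwise normalised title plus year), and all numbers are hypothetical.

```python
import re


def dedup_key(record):
    """Prefer a normalised DOI; fall back to a normalised title plus year."""
    doi = (record.get("doi") or "").strip().lower()
    if doi:
        return ("doi", doi)
    title = re.sub(r"[^a-z0-9]+", " ", (record.get("title") or "").lower()).strip()
    return ("title", title, record.get("year"))


def deduplicate(records):
    """Keep the first record seen for each key; return (unique, n_removed)."""
    seen = set()
    unique = []
    for record in records:
        key = dedup_key(record)
        if key not in seen:
            seen.add(key)
            unique.append(record)
    return unique, len(records) - len(unique)


def prisma_counts(identified, duplicates, excluded_title_abstract, excluded_full_text):
    """Derive the main PRISMA flow numbers from the counts at each stage."""
    screened = identified - duplicates
    full_text_assessed = screened - excluded_title_abstract
    included = full_text_assessed - excluded_full_text
    return {
        "identified": identified,
        "screened": screened,
        "full_text_assessed": full_text_assessed,
        "included": included,
    }


# Hypothetical imports from two databases (the DOI is a placeholder).
imported = [
    {"title": "Statins and Stroke: A Trial", "year": 2019, "doi": "10.1000/xyz1"},
    {"title": "Statins and stroke - a trial.", "year": 2019, "doi": "10.1000/XYZ1"},
    {"title": "Exercise after Stroke", "year": 2020, "doi": ""},
    {"title": "Exercise after stroke.", "year": 2020},
]
unique, removed = deduplicate(imported)
print(len(unique), removed)  # 2 2
print(prisma_counts(1200, 200, 900, 70))
```

A rule this simple will miss duplicates where one copy has a DOI and the other does not, which is one reason a manual check of the deduplicated set is still advisable.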

2.4. DistillerSR – Enterprise-Level Review Automation

DistillerSR is a software platform that supports complex or regulatory-grade systematic reviews.

Strengths:

- Custom forms for the extraction of data

- Auditing capability (audit trail)

- Risk of bias subcriteria (e.g., Series of Trials)

DistillerSR also integrates advanced research data extraction tools that support structured and auditable evidence synthesis. It has proven useful in the pharmaceutical and health technology assessment sectors.

2.5. RobotReviewer – Automated Risk of Bias Assessment

RobotReviewer uses NLP to identify trial characteristics and automatically assess risk of bias domains in line with the Cochrane Risk of Bias tool.[7]

2.6. EPPI-Reviewer – Advanced Evidence Synthesis

EPPI-Reviewer, developed by the EPPI-Centre, integrates text mining, screening, and data extraction, and is used for systematic reviews in education and social science.

2.7. Abstrackr – Free ML Screening Tool

Abstrackr uses a semi-automated method of screening and is especially useful for academic researchers who are on a tight budget.

Example

A clinical research team conducting a cardiovascular meta-analysis used Covidence for screening, ASReview for prioritisation, and RobotReviewer to pre-populate bias assessments, reducing project time by nearly eight weeks.

3. Benefits of Using Named Tools

Researchers who work with specific, named tools are able to:

- Directly compare attributes

- Select appropriate tools based on the size of their project

- Align with funding/regulatory requirements

- Increase reporting transparency

Insight:

Hybrid workflows (AI + human review) consistently outperform fully manual or fully automated approaches.

4. Limitations to Consider

Although these benefits are considerable, automation also brings challenges. A few examples:

- Algorithms may be biased when their training data are insufficient.

- Subject-matter expertise cannot be duplicated by AI.

- The cost of different subscriptions varies (with Covidence and DistillerSR costing more).

- Open-source tools may take significant time to learn.

Automation can improve our work, but it cannot replace human judgment.[1]

5. Best Practices When Using Automation Tools

- Pilot test tools before full deployment

- Use dual human verification

- Document tool name, version, and settings

- Report AI usage clearly in methodology
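One way to follow the documentation practices above is to keep a small structured record for each tool and export it with the review's methods files. The sketch below is an illustration only: the field names, the placeholder version string, and the example settings are assumptions, not a reporting standard.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ToolUsageRecord:
    tool_name: str
    version: str
    review_stage: str
    settings: dict = field(default_factory=dict)
    human_verification: str = "dual independent review"


def to_methods_json(records):
    """Serialise the records so they can be archived or quoted in the methods section."""
    return json.dumps([asdict(r) for r in records], indent=2, sort_keys=True)


# Hypothetical entry; replace the placeholders with the values actually used.
usage = [
    ToolUsageRecord(
        tool_name="ASReview",
        version="x.y.z",  # placeholder, not a real release number
        review_stage="title and abstract screening",
        settings={"stopping_rule": "hypothetical: 100 consecutive irrelevant records"},
    )
]
print(to_methods_json(usage))
```

Keeping the record in a machine-readable form makes it straightforward to rerun or audit the screening later with the same settings.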


Conclusion

Automated tools for systematic reviews can be very beneficial to researchers. Widely used options for supporting evidence synthesis include Rayyan, ASReview, Covidence, DistillerSR, RobotReviewer, EPPI-Reviewer, and Abstrackr. Used together, these tools can improve efficiency, reproducibility, and transparency while leaving researchers in full control of judgment and interpretation throughout the review.


References

1. Tsafnat, G., Glasziou, P., Choong, M. K., Dunn, A., Galgani, F., & Coiera, E. (2014). Systematic review automation technologies. Systematic Reviews, 3, 74. https://doi.org/10.1186/2046-4053-3-74

2. Marshall, I. J., & Wallace, B. C. (2019). Toward systematic review automation: a practical guide to using machine learning tools in research synthesis. Systematic Reviews, 8(1), 163. https://doi.org/10.1186/s13643-019-1074-9

3. O’Mara-Eves, A., Thomas, J., McNaught, J., Miwa, M., & Ananiadou, S. (2015). Using text mining for study identification in systematic reviews: a systematic review of current approaches. Systematic Reviews, 4(1), 5. https://doi.org/10.1186/2046-4053-4-5

4. Bannach-Brown, A., Przybyła, P., Thomas, J., Rice, A. S. C., Ananiadou, S., Liao, J., & Macleod, M. R. (2019). Machine learning algorithms for systematic review: reducing workload in a preclinical review of animal studies and reducing human screening error. Systematic Reviews, 8(1), 23. https://doi.org/10.1186/s13643-019-0942-7

5. van de Schoot, R., de Bruin, J., Schram, R., Zahedi, P., de Boer, J., Weijdema, F., Kramer, B., Huijts, M., Hoogerwerf, M., Ferdinands, G., Harkema, A., Willemsen, J., Ma, Y., Fang, Q., Hindriks, S., Tummers, L., & Oberski, D. L. (2021). An open source machine learning framework for efficient and transparent systematic reviews. Nature Machine Intelligence, 3(2), 125–133. https://doi.org/10.1038/s42256-020-00287-7

6. Marshall, I. J., Noel-Storr, A., Kuiper, J., Thomas, J., & Wallace, B. C. (2018). Machine learning for identifying Randomized Controlled Trials: An evaluation and practitioner’s guide. Research Synthesis Methods, 9(4), 602–614. https://doi.org/10.1002/jrsm.1287

7. Marshall, I. J., Kuiper, J., & Wallace, B. C. (2016). RobotReviewer: evaluation of a system for automatically assessing bias in clinical trials. Journal of the American Medical Informatics Association: JAMIA, 23(1), 193–201. https://doi.org/10.1093/jamia/ocv04